Editor’s Note: All opinion section content reflects the views of the individual author only and does not represent a stance taken by The Collegian or its editorial board.

We can’t carbon-offset our way out of hypocrisy.

Colorado State University is handing campus community members two new artificial intelligence mascots: CSU-GPT, positioned as a secure, academic tool for teaching and research, and RamGPT, pitched as a student-facing “agent” to connect campus resources. RamGPT is currently on hold.

CSU-GPT, according to the university’s own AI hub, keeps prompts and uploads inside CSU’s Microsoft Azure tenant, offers “chat with files” and lets users create custom agents from their own materials. A professor could upload a syllabus and let students ask basic questions without sending student data into the public internet’s collective blender.

At CSU’s own workshops, RamGPT had been framed as a “generative AI platform” for faculty use and course-tailored agents. The Collegian also described RamGPT as a second chatbot built around agents that are meant to help students find information regarding CSU.

Many people talk about AI as if it’s weightless: It’s just words on a screen, a friendly little bubble that answers questions. But generative AI runs on physical infrastructure: data centers packed with energy-hungry chips that require constant cooling. The electricity demands are climbing, and the water required to cool these systems is not imaginary.

In 2024, U.S. data centers used an estimated 183 terawatt hours of electricity, constituting more than 4% of total U.S. electricity use that year, with projections rising sharply by 2030. Cooling is part of that bill, and water use is part of cooling. Congress’s research arm also noted that a meaningful share of data center energy is increasingly tied to AI workloads.

The university recently celebrated earning a fifth consecutive STARS Platinum rating, an achievement built on tracking, measuring and credibility. You cannot spend decades building that trust and then casually roll out not one, but two campus AI platforms without publicly grappling with their cost. That’s not innovation; that’s reputational malpractice.

The obvious retort is to say, “But CSU-GPT keeps data inside CSU, so it’s safer.” True. Privacy and governance matter in academia, and CSU-GPT is explicitly marketed as governed by CSU’s Responsible AI framework. CSU leaders have also framed CSU-GPT as a way to get AI “into our students’ hands” and to lead rather than hesitate.

But “safer” is not the same as “sustainable.” If CSU provides an officially branded AI tool, usage can explode precisely because it feels endorsed. MIT’s reporting on generative AI’s environmental impact makes the uncomfortable point that ease of use, combined with a lack of incentives for users to reconsider the impact of their use, allows demand to keep increasing.

If we build campus AI to protect academic integrity and institutional reputation, we may accelerate the very environmental harms CSU claims to fight.

So why build two?

The best defense is that they serve different audiences: CSU-GPT for complex academic work and RamGPT for student support.

CSU already claimed CSU-GPT can create and share custom agents based on documents and websites, with access controls. If that is true, CSU does not need RamGPT to “connect resources across campus.” That’s an agent. That’s a curated knowledge base. That’s a well-designed web portal with a good search bar. Calling it RamGPT doesn’t make it smarter; it makes it marketable.

Don’t duplicate branding to manufacture the illusion of novelty.

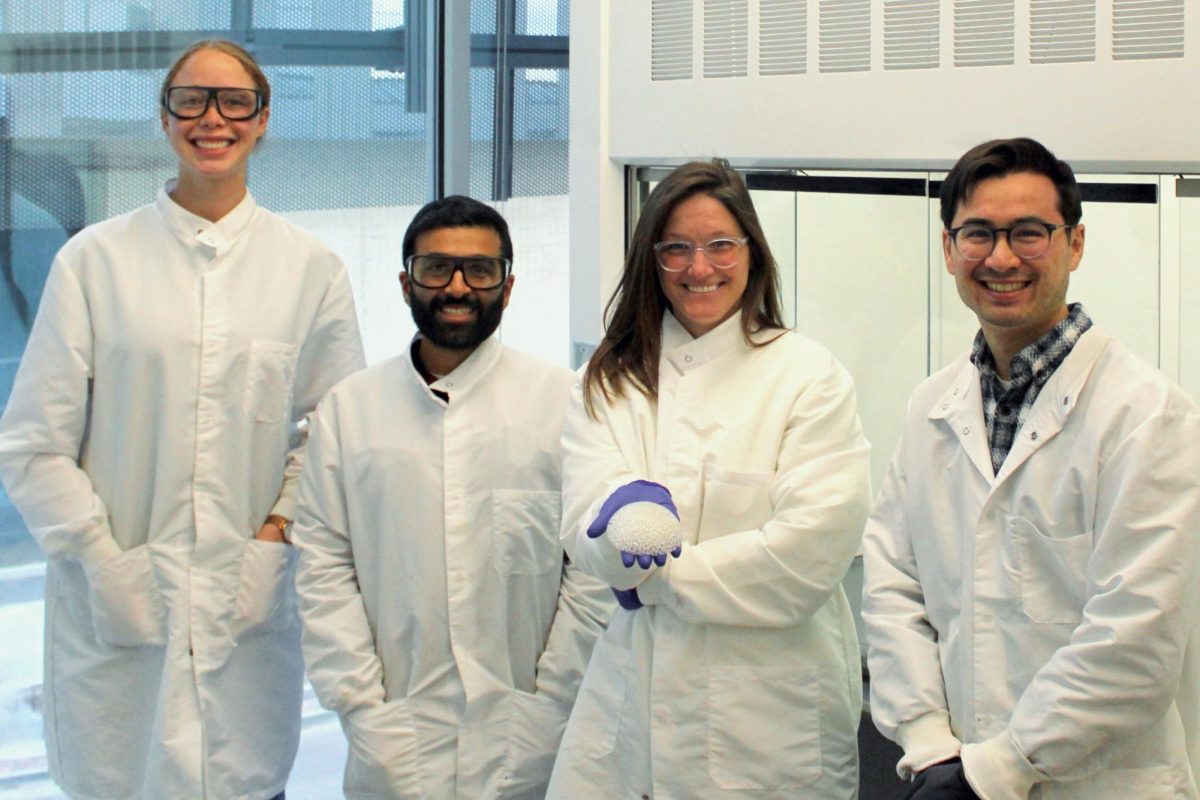

According to reporting by Tech Xplore, CSU paid Microsoft $120,000 in 2025 and is slated to pay $142,000 in 2026 and 2027 for these initiatives. Even if you think those numbers are defensible, they demand a harder question: What is CSU buying — educational value or the appearance of staying relevant?

Hypothetically, there is a world where CSU-GPT is a genuinely useful tool with a defensible purpose: to reduce misinformation on public platforms by drawing on teaching materials and interacting with students within CSU governance, shaped by faculty who actually understand their courses.

RamGPT, as described, is not in that world. It’s a second layer of AI between students and information that already exists. It risks turning basic campus literacy into a chat prompt and outsourcing habits like reading a webpage, scanning a syllabus or learning how to navigate a university.

If CSU insists on building internal AI, responsible leadership would look like restraint, consolidation and transparency about its real costs, not a rollout strategy that feels like “we need one, too” tech chasing. Students are not impressed by shiny dashboards if they come at the expense of the environmental commitments that attracted them here in the first place. Many chose this university precisely because it markets itself as sustainability-first. Betting institutional credibility on energy-intensive tools while simultaneously branding yourself as climate-conscious isn’t forward-thinking.

CSU has to decide what its reputation is worth. If we’re going to talk about reputation, we should examine it in full: CSU is expanding its partnership with Microsoft at a moment when Bill Gates’ name is appearing in documents related to Jeffrey Epstein. Universities do not operate in isolation from those reputational currents.

At the same time, students have watched the university tighten restrictions around free speech, expand highly visible branding campaigns, like expensive and useless billboards that push against municipal laws, and move forward on major initiatives with limited transparent consultation.

The pattern matters. It communicates an administration confident in its authority, but less so in its willingness to be questioned.

Here is the reality: Students have options. When tuition approaches $30,000 a year, families are not just buying coursework; they are buying trust in governance. If students begin to feel unheard, over-managed or marketed to instead of engaged, they will not simply complain. They will enroll elsewhere.

If CSU wants to be taken seriously as a sustainability-first, student-centered and ethically grounded university, it cannot afford decisions driven by momentum rather than principle.

If CSU-GPT becomes the next shiny convenience, we’re left with a familiar story: a university that models principles in the classroom but not in its decisions.

Reach Maci Lesh at letters@collegian.com or on social media @RMCollegian.

RonT • May 6, 2026 at 11:19 am

The CSU Extension website changes have made searching and getting answers near impossible. Even a minor search for ‘Ground Squirrels’ comes back blank!! Meanwhile, I’m looking at a saved PDF of ‘Managing Wyoming Ground Squirrels’ Fact Sheet No. 6.505 by Andelt and Hopper – which appears to have disappeared from the Extension website.

I’ve heard this is because all CSU websites now must be ADA compliant or some such nonsense … so if some great informative fact sheets aren’t ADA compliant, then they aren’t available to anyone anymore …